5 interesting ways to use a local LLM with MCP tools

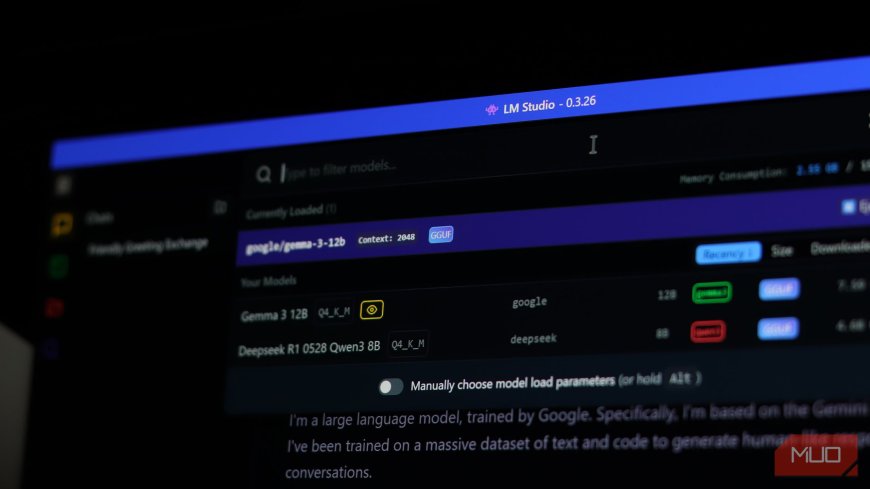

If you've been running a local LLM through Ollama or LM Studio, you already know the appeal: privacy, zero API costs, and full control over your AI stack. But a local LLM by itself is trapped inside a terminal window. It can generate text and analyze whatever data you provide, but it can't act on anything outside that window on its own.
