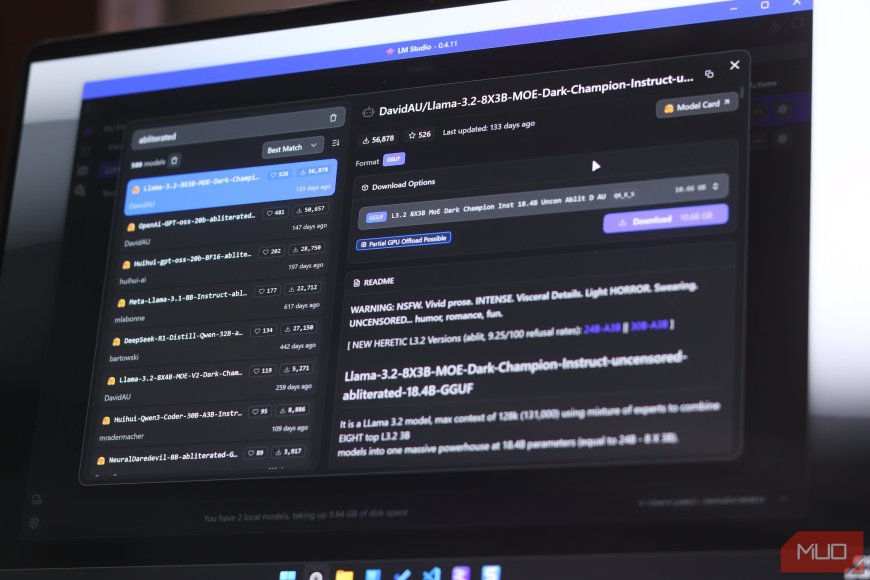

I tried an abliterated local LLM and it feels nothing like the others

Local LLMs are great for privacy: they run on your own hardware and require no subscription. That is, until you realize they suffer from the same problem as their cloud counterparts: extremely restrictive safety guardrails. Safety measures online are one thing, but if you're running your AI model locally, bypassing them should be easy, right?
